I’ve written at length about sponsorship measurement in books and blogs, but I’ve never really addressed why sponsorship measurement so often, and persistently, goes awry.

It’s a little concept called “confirmation bias”.

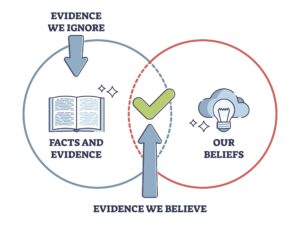

“Confirmation bias” is the tendency for people to seek out and interpret information in a way that confirms either what they already believe or what they hope is true.

There are a number of reasons why a sponsorship professional – who really should know better – would rather have ineffective measures that show success, than effective measures that might not:

Confirmation bias works against your real results, and against the best interests and credibility of the sponsorship industry, in a number of ways.

“Can you name the sponsors of this event?”

Never has there been a more misguided question asked in sponsorship research. Ugh.

There was a study a while back that figured out how people answer that question. They think of the categories of sponsors that are probably involved, then add telecommunications, insurance, airlines, and banking (because they sponsor everything). Then, they name either the market leader or the one they use. I will say that this was done in the absence of any major, onsite sponsor leveraging, so it really was more of a visual memory test than a measure of impact. But it was a memory test that people failed right-left-and-centre.

Asking this question as a gauge of sponsorship success makes as much sense as an airline gauging their success by asking visitors to an airport to name all of the airlines serving that airport – nothing about who they would fly or why, or what’s important to them, and which airline they think aligns best with that. Just names, and they’ll name all of the biggest ones, then add the ones they’ve flown or had some personal experience with, throw in an outlier or two, and that will be it.

You think I’m wrong? Go ahead and look at the inevitable polls leading up to Olympics or World Cups; the ones that ask people to name the sponsors. The companies and brands listed invariably include the biggest brands in that country – including the biggest telecom, biggest airline, biggest bank, and usually Coca-Cola – whether they are actually sponsors or not. And the media often labels the ones that aren’t as “ambushers”, and the sponsors who aren’t on the list as “losers”, whether any of them deserve those monikers or not.

Still think I’m wrong? Next time you go to a major sporting event with some friends, ask one of them to name the sponsors thirty minutes after you leave (and no helping from other friends). Chances are they’ll get a few right answers, a few wrong, they will almost all be huge brands, and they will name far fewer brands in all than were actually there.

But confirmation bias still makes this type of question appealing, especially for market leaders. They get big numbers because they’re big brands, often leading to the crazy, circular logic of making higher awareness of the sponsorship one of the primary objectives of the sponsorship.

“Are you more likely to buy from one of our sponsors?”

“Are you more likely to buy from a company that sponsors the arts?”

If someone has had a good time, they’re likely to say “yes” to questions like this. They are. But does that correlate to actual preference a week or month or year later, when they’re making an actual purchase decision? Doubtful.

The one relating preference to generic sponsors of “the arts” (or “charity”) is common, but particularly ridiculous. People generally answer yes. But, think about it: What companies don’t have at least one charitable or cultural sponsorship on the books? It’s far too ubiquitous. It’s like asking, “Would you be more likely to purchase from a company that has employees?”

But because there is likely to be an overwhelmingly positive response to questions like this, it provides just the justification that some sponsors are looking for.

You remember logo counters… those companies that add up all of the logo exposure and put some kind of arbitrary dollar figure on it based on media equivalencies? Never mind that the idea of logo exposure contributing anything to marketing objectives (changing people’s perceptions and behaviours around a brand) has been routinely debunked since 1991. But that’s exactly my point.

Confirmation bias is the only reason why these services still exist. People want to think they’re right, and logo counters are, above all else, the research equivalent of “yes men”.

It used to be that sponsors sought evidence that confirmed they were doing well. Now, those sponsors are accountable across multiple marketing objectives and have mostly realised that counting logos has nothing to do with their actual results. So what happened? The logo counters started working for properties, convincing them that the sponsors need this information to demonstrate that all those “logos on things” littering their crap proposals are actually worth something. They charge the rightsholders lots of money, and issue some worthless “valuation certificate” that sponsors take no notice of whatsoever.

Back to the sponsors… In my experience, sponsors who continue to use logo counting services…

Smart sponsors understand, respect, and enhance the experience of the fans. They know the fans and that experience, so they know what will be meaningful – what will nurture their relationship. For them, sponsorship isn’t a crapshoot. They go confidently forward with a plan that they already know is very close to the mark.

They aren’t afraid of real measurement, because they’ve done their homework and got strong internal buy-in from the experts across the company. And if something doesn’t go to plan, it’s not the end of the world and they will know what to do differently next time.

Those sponsors are measuring returns against a big range of marketing and business objectives. The internal stakeholders are nominating the objectives and benchmarks, and then using their mechanisms to accurately measure the impacts. Changes in perceptions are measured using a selection of the same questions used in brand tracking research, and changes are benchmarked against those ambient numbers. The sheer range and objectivity of the measures and stakeholders involved all but eliminate any trace of confirmation bias.

For more on this, read “Sponsorship Measurement: How to Measure What’s Important”.

© Kim Skildum-Reid. All rights reserved. To enquire about republishing or distribution, please see the blog and white paper reprints page.